Build Swarm Part 3: Teaching Drones to Heal Themselves

A drone disappears at 2 AM. No error. No goodbye. Just… gone.

The orchestrator keeps waiting. The package queue stalls. I wake up to a build that’s been stuck for six hours because drone-Tarn ran out of memory compiling dev-qt/qtwebengine and silently choked to death.

This kept happening. Different drones, different failures. Kernel panics. Network blips. Disk pressure. The result was always the same: a drone vanishes, the orchestrator has no idea, and packages sit in limbo until I manually intervene.

I got tired of being the swarm’s immune system.

The Silent Failure Problem

The Build Swarm was designed around a simple contract: the orchestrator assigns work, drones compile it, drones report back.

Except the contract assumed drones would always report back. In the real world, drones fail in the middle of things. They don’t send a polite “I’m dying” message. They just stop responding.

The symptoms were brutal:

    Build Progress:
      Needed: 0
      Building: 3
      Complete: 146
      Failed: 0

Zero needed, zero failed, three stuck “building” forever. Those three packages were assigned to a drone that had been dead for four hours. The swarm looked 98% done but would never finish without me SSH’ing in and kicking things.

Distributed systems don’t fail gracefully by default. You have to teach them how.

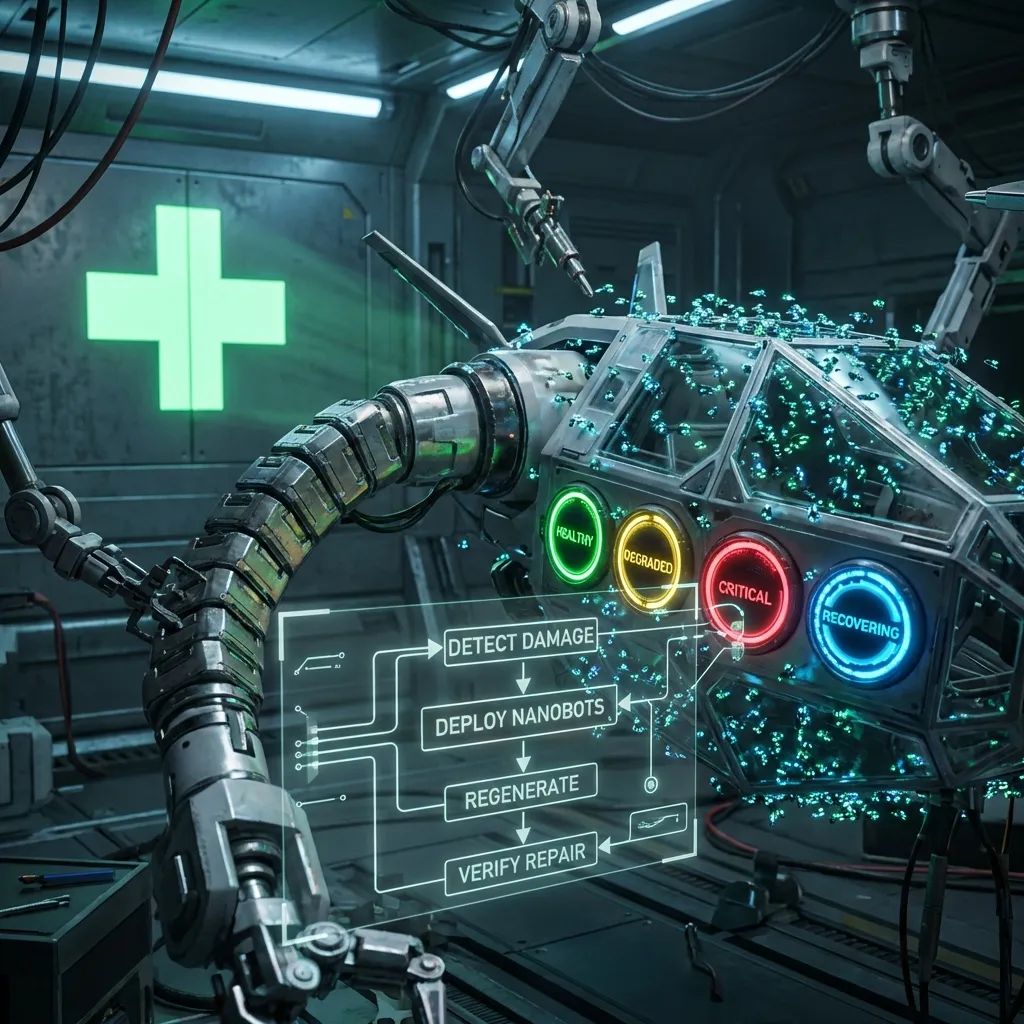

Health States: Knowing When You’re Sick

The first thing I needed was self-awareness. Drones needed to know they were in trouble before they crashed.

I added a health state machine to every drone:

    from enum import Enum

    class DroneHealth(Enum):
        HEALTHY = "healthy"        # All systems nominal
        DEGRADED = "degraded"      # Warning signs present
        CRITICAL = "critical"      # Unable to compile reliably
        RECOVERING = "recovering"  # Coming back from failure

Every 15 seconds, the drone checks itself. Memory usage. Disk space. Load average. Recent build success rate.

HEALTHY — Memory under 85%, disk under 90%, builds completing normally. The drone accepts work.

DEGRADED — Memory climbing past 85%. Disk over 90%. The last three builds took twice as long as expected. Something is wrong but not catastrophic. A degraded drone stops accepting new packages but finishes what’s on the workbench.

CRITICAL — Repeated build failures. Out of memory kills. The emerge process crashing. The drone enters a defensive posture — stops everything and starts returning work to the orchestrator.

RECOVERING — After coming back from critical. Runs a self-check sequence: verify portage tree is intact, confirm disk space, test that emerge --info returns clean output. Only after passing does it transition back to HEALTHY.
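As a rough illustration, the thresholds above could be mapped to states with a small pure function. This is a minimal sketch, not the swarm's actual code: the `Metrics` fields, the `classify` name, and the failure cutoff are assumptions made for the example. Only the 85% memory, 90% disk, and "last three builds" figures come from the descriptions above.

```python
from dataclasses import dataclass
from enum import Enum


class DroneHealth(Enum):
    HEALTHY = "healthy"
    DEGRADED = "degraded"
    CRITICAL = "critical"
    RECOVERING = "recovering"


@dataclass
class Metrics:
    """Hypothetical snapshot a drone might collect on each self-check."""
    memory_pct: float     # percent of RAM in use
    disk_pct: float       # percent of disk in use
    slow_builds: int      # recent builds that took twice as long as expected
    recent_failures: int  # recent build failures, OOM kills, emerge crashes


MEMORY_WARN_PCT = 85.0  # from the article
DISK_WARN_PCT = 90.0    # from the article
SLOW_BUILD_WARN = 3     # "the last three builds"
FAILURE_CRITICAL = 2    # assumption: the article only says "repeated"


def classify(m: Metrics) -> DroneHealth:
    """Map one snapshot to a state. RECOVERING is entered elsewhere."""
    if m.recent_failures >= FAILURE_CRITICAL:
        return DroneHealth.CRITICAL
    if (m.memory_pct > MEMORY_WARN_PCT
            or m.disk_pct > DISK_WARN_PCT
            or m.slow_builds >= SLOW_BUILD_WARN):
        return DroneHealth.DEGRADED
    return DroneHealth.HEALTHY
```

Keeping the classification free of I/O means the thresholds can be tested without a real drone. Collecting the numbers is a separate job.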

    HEALTHY ──[warning thresholds]──> DEGRADED
    DEGRADED ──[continued degradation]──> CRITICAL
    CRITICAL ──[conditions improve]──> RECOVERING
    RECOVERING ──[self-check passes]──> HEALTHY

The goal isn’t perfection. It’s awareness. A drone that knows it’s sick can do something about it. A drone that doesn’t know just dies silently and takes its assigned packages into the void.
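One way to keep a drone from jumping between states arbitrarily is to encode the four edges from the diagram as a lookup table. The sketch below is hypothetical (the `ALLOWED` table and `transition` helper are names invented here) and it encodes only the edges shown in the diagram. A real table would likely carry more, such as a path from DEGRADED back to HEALTHY.

```python
from enum import Enum


class DroneHealth(Enum):
    HEALTHY = "healthy"
    DEGRADED = "degraded"
    CRITICAL = "critical"
    RECOVERING = "recovering"


# Edges copied from the diagram; anything else is rejected.
ALLOWED = {
    DroneHealth.HEALTHY: {DroneHealth.DEGRADED},
    DroneHealth.DEGRADED: {DroneHealth.CRITICAL},
    DroneHealth.CRITICAL: {DroneHealth.RECOVERING},
    DroneHealth.RECOVERING: {DroneHealth.HEALTHY},
}


def transition(current: DroneHealth, target: DroneHealth) -> DroneHealth:
    """Return the new state if the move is allowed; staying put is always fine."""
    if target is current or target in ALLOWED[current]:
        return target
    raise ValueError(f"illegal transition: {current.value} -> {target.value}")
```

With this table, a CRITICAL drone cannot return straight to HEALTHY. It has to pass through RECOVERING, which is where the self-check runs.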

Graceful Work Return: The Key Innovation

This was the part that changed everything.

Previously, when a drone failed, its assigned packages were just… lost. The orchestrator had to time out (which took ages) or I had to manually reclaim them. Now, a drone in trouble actively hands its work back.

A drone detects it’s entering CRITICAL state. Instead of crashing, it pauses new work, finishes whatever is mid-compile if stable enough, then sends every uncompleted package back to the orchestrator:

    def return_work(self, reason: str):
        """Return all uncompleted packages to orchestrator."""
        for pkg in self.local_queue:
            # Finished packages have already been reported; skip them.
            if pkg.status != 'complete':
                self.orchestrator.return_package(
                    package=pkg.atom,
                    drone_id=self.drone_id,
                    status='returned',
                    reason=reason
                )
        # Nothing is left on the workbench once everything is handed back.
        self.local_queue.clear()

On the orchestrator side, returned packages go back into the main queue. But there’s a twist: the orchestrator records which drone returned them. When reassigning, it avoids sending the same package back to the drone that just gave up on it.

This prevents a nasty loop where a package that causes OOM on drone-Tarn (14 cores, 16GB RAM) keeps getting reassigned to drone-Tarn and failing over and over. Instead, it gets routed to drone-Meridian (24 cores, 64GB RAM) which can handle the memory-hungry compile without breaking a sweat.

The result: packages find the drone that can actually build them. Work migrates naturally toward capable hardware. No manual intervention. No stale queues.
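The orchestrator half of this can be sketched in a few lines. This is an illustration under assumptions, not the real orchestrator: the `ReturnAwareQueue` class and its `add` and `next_for` methods are hypothetical. Only the `return_package` keyword arguments mirror the drone-side call shown earlier.

```python
from collections import deque
from typing import Optional


class ReturnAwareQueue:
    """Hypothetical orchestrator queue that remembers who returned what."""

    def __init__(self) -> None:
        self.pending = deque()  # package atoms waiting for a drone
        self.returned_by = {}   # atom -> set of drone ids that gave it up

    def add(self, atom: str) -> None:
        self.pending.append(atom)

    def return_package(self, package: str, drone_id: str,
                       status: str = "returned", reason: str = "") -> None:
        """Requeue a package and note which drone handed it back."""
        self.returned_by.setdefault(package, set()).add(drone_id)
        self.pending.append(package)

    def next_for(self, drone_id: str) -> Optional[str]:
        """Hand out the first pending package this drone has not returned."""
        for atom in self.pending:
            if drone_id not in self.returned_by.get(atom, set()):
                self.pending.remove(atom)  # safe: we return immediately
                return atom
        return None
```

A drone that returned a package simply never sees it again, while any other drone can pick it up. One thing a real system would have to handle is a package that every drone has returned: in this sketch it would sit in the queue forever.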

The Grounding Incident

I deployed v2.5.0 with the self-healing system to all five drones. Felt good about it. The code was clean, the logic was sound, the tests passed.

Next morning I checked the monitor.

    drone-Izar: GROUNDED
    drone-Tarn: GROUNDED
    drone-Meridian: GROUNDED
    drone-Tau-Beta: GROUNDED
    sweeper-Capella: GROUNDED

Every single drone had been grounded. The orchestrator had locked them all out.

My beautiful self-healing system had detected that every drone was failing, correctly grounded them all to prevent further damage, and then had nothing left to assign work to. The swarm was technically functioning perfectly — protecting itself from bad drones — except all the drones were “bad” and no packages were being built.

Helpful.

The root cause was embarrassingly mundane. The build queue was stale. It had been generated days earlier when the portage tree listed sys-apps/systemd-utils-6.22.0. But my desktop still had version 6.20.0 installed. The dependency resolver kept trying to build packages against 6.22.0, but the emerge environment couldn’t reconcile the slot conflicts.

Every drone was hitting the same wall:

    !!! Multiple package instances within a single package slot have been pulled
    !!! into the dependency graph, resulting in a slot conflict:

    sys-apps/systemd-utils:0
      (sys-apps/systemd-utils-6.22.0 pulled in by ...)
      (sys-apps/systemd-utils-6.20.0 installed)

Five failures and you’re grounded. Every drone hit five failures within minutes.
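To see why a shared bad input grounds everything, here is a minimal sketch of a five-strikes rule. The `GroundingTracker` class is hypothetical, and whether the real orchestrator counts total or consecutive failures is not stated. This version counts totals.

```python
from collections import Counter

GROUND_AFTER = 5  # "Five failures and you're grounded"


class GroundingTracker:
    """Hypothetical per-drone failure counter on the orchestrator."""

    def __init__(self, limit: int = GROUND_AFTER) -> None:
        self.limit = limit
        self.failures = Counter()
        self.grounded = set()

    def record_failure(self, drone_id: str) -> bool:
        """Count one failure; return True if the drone is now grounded."""
        self.failures[drone_id] += 1
        if self.failures[drone_id] >= self.limit:
            self.grounded.add(drone_id)
        return drone_id in self.grounded

    def available(self, drones: list) -> list:
        """Drones that may still receive work."""
        return [d for d in drones if d not in self.grounded]
```

The counter has no idea whether the drone or the package is at fault. If every drone hits the same slot conflict, every counter reaches five and `available` comes back empty.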

The fix was one command:

    build-swarm fresh

Nuked the stale queue. Resynced portage across all nodes. Rediscovered packages from scratch. Within ten minutes, every drone was ungrounded and actively building.

Stale state is the silent killer of distributed systems. The self-healing code was doing exactly what it should — protecting the swarm from repeated failures. The bug wasn’t in the self-healing. It was in the state I fed it. Garbage in, grounded out.
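A cheap guard against this is to check how old the queue is before handing out work. The sketch below assumes the orchestrator records when the queue was generated and when the portage tree was last synced. Both timestamps, the `queue_is_stale` name, and the 24-hour default are assumptions for illustration.

```python
from datetime import datetime, timedelta, timezone


def queue_is_stale(queue_built_at: datetime,
                   tree_synced_at: datetime,
                   max_age: timedelta = timedelta(hours=24),
                   now: datetime = None) -> bool:
    """True if the queue predates the last tree sync or is older than max_age."""
    now = now or datetime.now(timezone.utc)
    built_before_sync = queue_built_at < tree_synced_at
    too_old = (now - queue_built_at) > max_age
    return built_before_sync or too_old
```

If the check fails, the safe response is to rebuild the queue rather than let drones burn through their failure budget on it.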

The Tailscale Connectivity Detour

While debugging the grounding issue, I noticed drone-Tarn was genuinely unreachable — not grounded, just offline. The tailscaled daemon wasn’t running.

The init script depended on net.lxcbr0 — the LXC bridge interface. The bridge existed, but the init system was trying to create it again on boot:

    Cannot enslave a bridge to a bridge

So net.lxcbr0 failed to start, which meant tailscaled never started, which meant the Andromeda-network drones dropped off the mesh entirely. They were running fine locally on 192.168.20.x but invisible to the orchestrator.

Your fancy application-level recovery means nothing if the network layer is broken underneath it. Recovery has to happen at every layer.

What I Actually Learned

Self-healing isn’t about preventing failures. It’s about making failures recoverable. Drones will always crash. Memory will always run out. Networks will always hiccup. The question is whether the system can put itself back together without me waking up at 3 AM.

Graceful work return is worth more than any amount of monitoring. Knowing a drone is dead is useful. Having the drone hand its work back before it dies is transformative.

Stale state will activate your safety systems against you. When your protection mechanisms are working perfectly but your inputs are garbage, you get a swarm that protects itself into total paralysis. Always validate state freshness before trusting automated decisions.

One command should fix the common case. build-swarm fresh became the reset button. Having a nuclear option that reliably returns you to a known-good state is worth more than trying to make incremental recovery handle every edge case.

The self-healing system is running in production now. Drones occasionally enter DEGRADED, shed some work, and recover on their own. The orchestrator routes work around struggling nodes. Packages find their way to hardware that can build them.

Most of the time, I don’t even notice it working. That’s the point.

Next up: Part 4 — From Chaos to Production — Cron jobs that weren’t, circuit breakers that were, and the week the swarm became production-ready.